Monetise This! Conspiracy Entrepreneurs, Marketplace Bots, and Surveillance Capitalism

Feb 17, 2021

In previous posts, we have approached conspiracy theories mainly in terms of their discursive or epistemological qualities and as forms of dis- and misinformation rooted in and expressive of a political and social context. In this post, we want to consider the different ways in which conspiracy theories are monetised and commodified today and how these might shape any response to the “problem” of online conspiracy theories.

Conspiracy Inc.

Though there are considerable issues with Richard Hofstadter’s term, “the paranoid style” (1964) because of the way it pathologises political opponents and expresses distaste for the masses, it is helpful when approaching commodification. It reminds us that conspiracism can always be a glossy surface, an affectation or stance to be imitated, a taste rather than a core belief. It can be style over substance. As such, it can be iterated to various effects, repeated in “loyal” and “disloyal” ways, employed straight or with irony, invoked with hope or nihilism, circulated by true “believers” or opportunists, distributed as misinformation (unwittingly shared falsehoods) or disinformation (knowingly shared falsehoods). In light of this radical freedom from the context, belief or intent of any “original”, a style can take on material, cultural forms that one can literally purchase, rather than (as with a belief) only metaphorically “buy into”.

Conspiracism and the paranoid style have been involved with processes of commodification for some time now. For example, Mark Lane’s conspiracist take on the assassination of John F. Kennedy, Rush to Judgment, published in 1966, spent 29 weeks on the New York Times best-seller list. A veritable industry of books questioning the lone gunman theory grew in the years that followed. It even spawned early (and far less cynical) examples of conspiracy entrepreneurs such as the housewife-turned-radio-host, Mae Brussell, whose syndicated show, “Dialogue: Conspiracy” (later renamed “World Watchers International”), centred on conspiracy theories about JFK.

In terms of highbrow fictional cultural production, a whole genre of conspiracy film influenced by events like the assassination of JFK and the later Watergate scandal evolved from the 1970s onwards. Literary fiction by authors like Joan Didion, Don DeLillo, Thomas Pynchon, and Ishmael Reed became infused with new reflexive forms of paranoid thinking. While the Cold War fear of communism also produced its cultural commodities, the cultural paranoia that developed after the JFK assassination diverged from the “official” government narrative, commodifying distrust of those very institutions previously meant to provide ontological security from the threat identified. Paranoia became more “a default attitude for the post-1960s generation, more an expression of inexhaustible suspicion and uncertainty than a dogmatic form of scaremongering” (Knight 2000, 75). The ubiquity of a postmodern paranoia meant that all kinds of high cultural forms were infused with its sensibility, but it was not yet part of a fully-fledged mass market.

In 2000, Peter Knight recognised that “a postmodern form of paranoid skepticism has become routine in which the conspiratorial netherworld has become hyper-visible, its secrets just one more commodity” (2000, 75). His book documents the ways in which a marginal paranoid style evident in the political realm during Hofstadter’s time became suffused into the culture at large over the ensuing decades. Indeed, the 1990s drew on the still somewhat sub/countercultural or, in the case of literature, high cultural status of conspiracy theory and postmodern paranoia and turned them into popular forms of mass culture. The X-Files (Fox, 1993-2002 and 2016-18) offers the best-known example. This long-running TV show packaged its conspiracy theory about a deep state faction within the US government and its coverup of an extra-terrestrial invasion in a unique blend of conspiracy thriller and speculative genres (horror and science fiction). The show achieved widespread appeal by mixing tones of sincerity and irony. Galvanised by this show and countless television documentaries, conspiracy theories became part of the (pop) cultural conversation, even while they might have had less political influence, so that theories about JFK, Roswell, alien abductions, black helicopters, AIDS, flat earth, and the Illuminati had common currency.

By 1997, the box office flop, Conspiracy Theory (Dir. Donner), could use the signifier without any explanation. The film failed not because it assumed a readymade audience, but because it did not incorporate any of the postmodern paranoia, playfulness, self-referentiality, and irony that rewarded consumers of other conspiracy texts. It is an overly literal interpretation of a phenomenon that had come to primarily resonate, in such fictions, at the allegorical level. The film is nevertheless notable because it registers conspiracy theory as a marketable category rather than either as purely political rhetoric (as Hofstadter would have it) or as a countercultural or subcultural marker. The committed Cold-Warrior or paranoid stylist that Hofstadter identified had “become an armchair consumer of The X-Files in the 1990s” (Knight 2000, 45).

The popularity of conspiracy fictions, earnest or playful, continues to the present day. Following and extending The X-Files’ successful formula, sophisticated conspiracy narratives such as Watchmen (HBO, 2019), Westworld (HBO, 2016-), Lost (ABC, 2004-2010), and The OA (Netflix, 2016-19) reward avid fans and attentive audiences with so-called Easter eggs: self-referential gifts that encourage intense hermeneutic activity, online fan semiotic production, and opportunities to buy associated merchandise. But a new development in the commodification of conspiracy theory has emerged in the last two decades because of the democratisation of digital production and broadcasting, the opportunities for self-promotion offered by social media platforms, new avenues for monetisation online, and an emboldened populist politics that encourages conspiracist subjectivities that can be affirmed through forms of consumption. In this post, we will examine these developments in the context of a continuously evolving conspiracy market seeking ways to monetise conspiracy theories. In its most recent manifestation, this market is stoked by a strand of populism that capitalises on ethnonationalism and associated feelings of relative deprivation, which Eatwell and Goodwin describe as “a sense that the wider group . . . is being left behind relative to others in society, while culturally liberal politicians, media and celebrities devote far more attention and status to immigrants, ethnic minorities and newcomers” (2018). It promises “armchair consumers” of entertaining conspiracy fictions that they can be conspiracist “prosumers” (Ritzer and Jurgenson 2010). While conspiracists online can certainly become active in the co-production of alternative cosmologies through social media and other community-forming platforms, only certain online conspiracists will make money from this activity.

Conspiracy Entrepreneurs

As we noted in a previous blog post, under neoliberalism, the figure of the entrepreneur has increasingly focused on the self as the prime enterprise-unit. Michel Foucault writes, “the stake in all neo-liberal analysis is the replacement every time of homo œconomicus as a partner of exchange with homo œconomicus as entrepreneur of himself, being for himself his own capital, being for himself his own producer, being for himself the source of [his] earnings” (Foucault 2008, 226). The rational management of one’s own human capital involves not renting oneself out for wage labour, but rather investing in oneself in order to constantly be at an advantage within the market. This ‘rational actor’—this new and improved homo œconomicus—is able to thrive amongst the ruins of liberal democracy through modes of self-reinvention and self-exploitation.

Sean O’Brien, one of the research assistants on the Infodemic project, points out that given this hyperrational attention to self-exploitation and modulation, we might consider the entrepreneur of the self as the opposite of the hyperirrational conspiracy theorist. While the entrepreneur nimbly ascends the ladder of opportunity, constantly optimising him/herself as a commodity, the conspiracy theorist is associated with downward class mobility and stuck in forms of negative, enervating grievance. Both subjects seem to emerge out of precarity—job insecurity and increased exposure to risk—but one is a resilient go-getter, willing to align their sensibility with the market, and therefore beating the system at its own game, while the other becomes paralysed within loops of paranoid logic and feels defeated or controlled by invisible forces. Both subjects also exude a kind of anti-state sensibility: the entrepreneur because s/he thrives in unregulated spaces of capital where each person is responsible for themselves; the conspiracy theorist because s/he fears being thwarted by its machinations. In fact, these figures are not so far apart. The commodification of conspiracy theory today is led by conspiracy entrepreneurs whose personalities and experiences are central to their market success. They combine a conspiracy theorist sensibility, a traditional entrepreneurial spirit, and the neoliberal entrepreneurialism of the self which fashions personhood as an enterprise. William Callison and Quinn Slobodian call such figures “agents of disinfotainment” who deal in “gig conspiracies for the gig economy.” The force of the entrepreneurial imperative of neoliberalism extends to monetise even an apparent counterforce to it, but it also reaffirms the gap between producer and consumer that the affordances of digital media otherwise promise to break down.

The flow of neoliberal entrepreneurialism into the conspiracist landscape is both fitting and surprising in terms of ideology. On the one hand, many Anglo-American conspiracy entrepreneurs are aligned with a neoliberal self-responsibilised subjectivity that finds itself in market relations with others rather than within the social field of the state. But because this often manifests as far-right or libertarian ideas of sovereign citizenship and extreme forms of individualism, in which the government and/or elites are blamed for one’s woes, such a position diverges from globalised neoliberal marketisation. The most ambitious right wing conspiracy entrepreneurs today therefore find themselves having to reflect a revanchist or xenophobic politics, while trying to appeal and trade across national boundaries.

Conspiracy entrepreneurs and influencers profit from conspiracist merchandise and broadcasting in ways that “hucksters” and “quack doctors” have been doing for years. But there is a difference. As “alternative influencers”, having to operate in a digital attention economy, their identities are extensions of the commodities being sold. They themselves are brands that have to quickly adapt to emerging conspiracy narratives and developments. Some conspiracy entrepreneurs attain the status of conspiracy guru. As such, they create complex conspiracy cosmologies and, on the back of this, sell books, merchandise, and services. Such status is reserved for those conspiracy entrepreneurs most able to adjust their provision to the desires of the market or who can stay in the game for the longest time.

In the same way that fake news sites are often aesthetically indistinguishable from legitimate news outlets because publishing packages have become cheaper and easier to use, the marketplaces produced by the most successful conspiracy entrepreneurs and gurus closely resemble any other online market space. While a Do It Yourself, anti-establishment aesthetic might work for certain forms of populist provision, these web interfaces employ high production values and frictionless consumer experiences. On David Icke’s website, for example, users can follow breaking news with a conspiracist twist, navigate to the chat forum, subscribe to Ickonic (Icke’s conspiracist streaming service) for £9.99 a month, and purchase Icke’s books, tickets for events, or probiotics. Advertisements for various conspiracy-adjacent media, services, and products adorn the page. The pandemic, and Icke’s conspiracy theories about it, have been good for business. One report shows that traffic to davidicke.com increased to 4.3 million in April 2020 from 600,000 in February of the same year. Anticipating our turn to the role of platforms themselves, it is important to note that 31 per cent of that traffic came from social media websites. Icke’s book sales and public speaking have been a lucrative source of revenue over the years. The Telegraph reported that sales for a single show during an international speaking tour totalled £83,000. Equally, from a case Icke brought against the US distributor of his books in 2008, it is clear that sales figures in America alone were in the millions of dollars. (However, less impressively, the most recent filing to Companies House in the UK tells us that equity in Ickonic Enterprises Inc. amounted to £194,589 in the tax year 2018-2019, which seems less than those other figures would suggest.)

Conspiracy theory veteran Alex Jones attracts followers to his InfoWars platform through conspiracist content (news items and a live stream of the show) but makes most of his money through online sales. Jones hawks an extensive range of survival gear (including prepper food), t-shirts, conspiracist videos, wellness supplements, and unverified cures including, until the FDA demanded they be removed in April 2020, many products containing colloidal silver, which Jones claimed in a live stream on the 20th of March, 2020, could kill “the whole SARS-corona family at point-blank range”. Such products are “intended to assuage the same fears he stokes”. The InfoWars website attracts more than 8 million visitors each month according to Quantcast, and more than two-thirds of his funding comes from selling his products. The interlinked companies that make up InfoWars do not publicly report their finances, but the New York Times has reported on them as of 2014: “One entity—created to house the supplements business—generated sales of $15.6 million and net income of $5 million from October 2013 through September 2014 . . . During the same period, another entity, possibly recording overlapping revenues, listed net income of $2.9 million and sales of $14.3 million, with merchandise sales accounting for $10 million, advertising for nearly $2 million and $53,350.66 in donations, according to an unaudited company statement.” While it is true that these figures regarding site traffic and profits were gathered before Jones was deplatformed by various social media sites in 2018 and 2019 (decisions that will have certainly curtailed revenue Jones would have earned from those sites and may have reduced traffic to the InfoWars website and online store), it is also possible that the pandemic has increased traffic to Jones’s site given the turn towards conspiracist content and alternative answers in the face of a global crisis. As we have seen, the latter was certainly the case for Icke.

While Icke and Jones are two of the most prominent conspiracy entrepreneurs, their digital offering is replicated at more modest scales across the internet. One group of MA student researchers at King’s College London (Jingyi Chen, Wei-Lun Huang, Haoxiang Ma, Hongyi Ren, Haiqi Zhang) considered the profiles and homepages of 102 YouTube conspiracy influencers. Many of these influencers have homepages that use various monetisation strategies: the researchers found that 56 percent of the influencers offer goods or services for sale, while 41 percent offer memberships and subscriptions using direct payments through PayPal, crowdfunding sites like Patreon, or cryptocurrencies like Bitcoin.

Dustin Nemos (whose real name is Dustin Krieger) is one such influencer seeking to maximise profits. In his thirties, Nemos belongs to a different generation from veterans like Icke and Jones and is a relative newcomer to the conspiracy marketplace. Mixing the populist and pretentious, the folksy and fanciful, Nemos describes himself as “a freedom-maximalist, Voluntaryist, Autodidact Polymath, Husband, Father, Entrepreneur, Farmer, Trend Watcher, Avid Researcher and hobbyist Economist, holistic researcher, Philosopher, and Political Talking Head” [erratic use of caps in original]. Despite these eclectic interests, his offering does not veer too greatly from the “conspiritual” cocktail of anti-government, anti-elitist conspiracy theory and alternative (veering towards “new age”) remedies mastered by Jones and Icke. (The term “conspiritual” is from Charlotte Ward and David Voas (2011) and is useful for thinking about how the conspiracy entrepreneurs under consideration here move between and help to merge different and sometimes apparently incompatible markets.)

Unlike Jones and Icke, however, Nemos focuses on one, albeit far-reaching and all-encompassing, conspiracy theory: that of QAnon. His co-authored book, QAnon: Invitation to the Great Awakening, rose high in Amazon’s categories for “Politics” and “Censorship” and appeared on its “Hot New Releases” section on the landing page in March 2019 before being banned in January 2021. He also sells ingeniously branded products such as his “Sleepy Joe Supplements” and “Great Awakening Coffee” because QAnon merchandise acts as an extension of online research, “[binding] adherents to the conspiracy theory just as powerfully as do memes and online catchphrases” according to Lisa Kaplan of the counter-disinformation consultancy, Alethea Group. Nemos is intent on making the leap from online discussion to purchasing goods as seamless as possible. Even the name of Nemos’ site, Red Pill Living, is a cliché that originated in the deep vernacular web “manosphere” (Nagle 2017, 88) and subsequently spread to surface and deep vernacular conspiracist web spaces. Crucially, this is Red Pill Living: Nemos is trying to sell a lifestyle, not simply individual commodities. In this way, Nemos tries to foster loyal communities that can offer ongoing financial support, rather than one-time customers. Amusing though the labels are, the products need to be more than a gimmick if Nemos is to secure repeated sales. After all, one bag of Great Awakening Coffee might make a witty gift, but the brand needs to resonate on a more sincere level to turn a profit.

Nemos’ site positions itself as on the side of all kinds of freedoms: freedom of information, freedom of speech, and what it calls medical freedom, “the right to be informed about health, and make the right decisions for their own health—without being told what they can or cannot do by overzealous or corrupted bureaucrats” (https://www.redpillliving.com/we-believe/). As we have seen to be the case for other conspiracy entrepreneurs, Nemos creates alarm over such infringements, capitalising on the deep frustrations QAnon and Covid-19 scepticism tap into, while offering apparent solutions on the same website. He will reveal the “truth” about election fraud, Covid-19 vaccinations, and social media censorship. In terms of health freedoms, he tells customers that “it starts with the highest quality, vetted holistic and health products on the planet,” sold on his platform. Through this marketplace, Nemos offers customers the liberty to assert freedom through consumption.

Nemos was once able to use various social media platforms to direct traffic to his online store. However, he has been deplatformed during purges of QAnon-related accounts on the major social media platforms on various occasions and barred from the crowdfunding platform Patreon. Since the storming of the Capitol in January 2021, Nemos’ merchandise has also been de-listed by Shopify and his QAnon book removed from Amazon. Consequently, he has been forced to move to less mainstream and less lucrative social media options like Bitchute and Parler, and use the less well-known crowdfunding site, Donor Box. While Nemos has attained Bitchute badges for having over 10,000 subscribers and over a million views, suggesting he must receive some income from viewers through its “tip/pledge” button and direct traffic to his marketplace, research into the revenue opportunities of alternative social media sites points out how difficult it is to replicate the rewards of mainstream social media. The organisation Hope Not Hate, for example, collated remarks by alt-right figures such as Milo Yiannopoulos on their reduced influence. Yiannopoulos claims to have lost 4 million followers during a round of purges on mainstream social media and says that he cannot replicate that success on platforms such as Telegram and Gab: “I can’t make a career out of a handful of people like that. I can’t put food on the table this way”. He complains that “None of [these platforms] drive traffic. None of them have audiences who buy or commit to anything.” Richard Rogers reports that “when Alex Jones was banned from Facebook and YouTube, his InfoWars posts, now only available on his websites (and a sprinkling of alternative social media platforms), saw a decline in traffic by one-half” (2020, 215; drawing on Nicas 2018). Assuming attention and traffic translate into financial gain, such measures are significant. Indeed, Nemos told reporters from Reuters that he had lost between one and two million dollars in revenue because of the crackdowns.

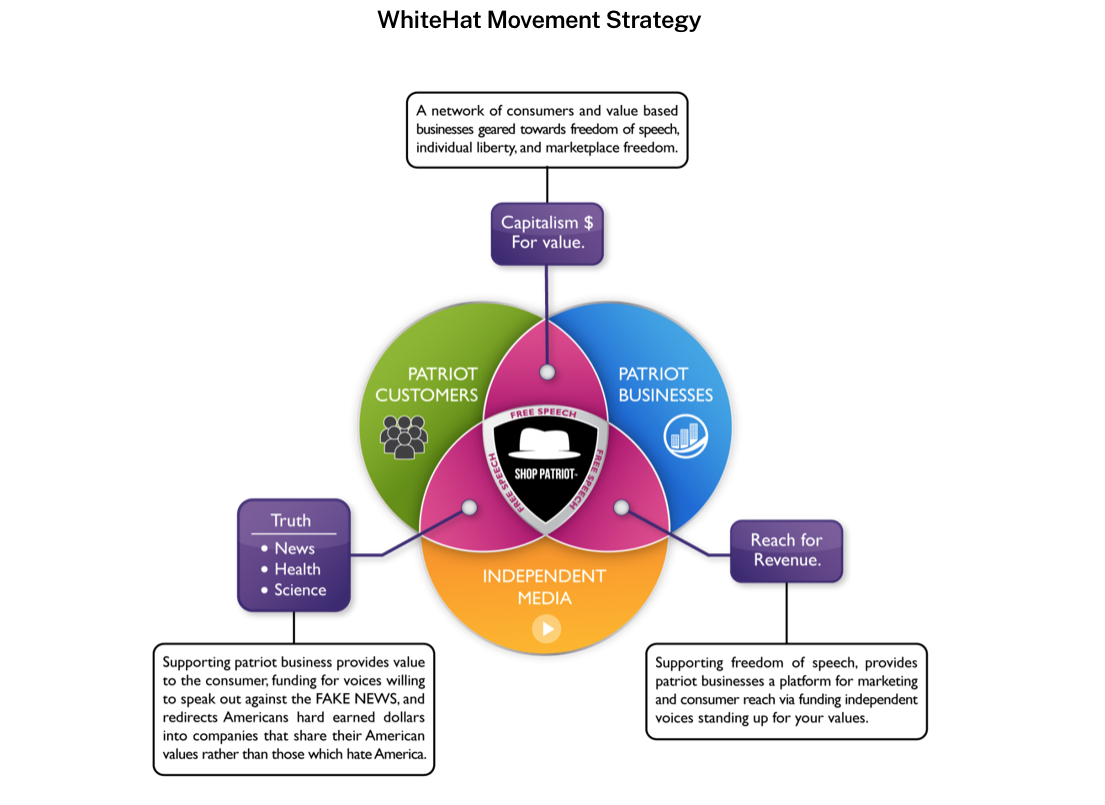

However, in promotional material, Nemos contradicts this admission and boasts of having tripled his income since being deplatformed by YouTube by creating the WhiteHat Movement, a network of businesses and services that identify with “patriot values” and want to support and advertise on the sites of deplatformed figures (whom he refers to as “independent media voices”). Before the end of Trump’s presidency, there were nine businesses listed, but once Biden took office, Magazon (an online marketplace dedicated to all things Trump) and an associated company were no longer listed and their sites were no longer functioning. While Nemos’ own marketplace uses a similar recipe to those belonging to more established conspiracy entrepreneurs, his turn to this ambitious venture is notable whether it is as successful as he claims or not (and it is hard to think that seven businesses can achieve the alternative business network and consumer experience Nemos imagines, or the profits he claims).

Fig. 1: Screenshot, “WhiteHat movement strategy”, from Dustin Nemos

Clearly, Nemos’ vision has not been realised. Nevertheless, his venture indicates a shift in conspiracy entrepreneurialism as it attempts to exploit the populist wave to ask businesses to identify under a political banner and steer would-be supporters towards a branded consumer experience, creating what Nemos grandiosely calls the “patriot economy” (https://www.whitehatmovement.com/redpilled-profits/). Just as we might find some consumers looking for signs of ethical or green merchants to ensure that their shopping experiences align with their values, Nemos is trying to establish a network of online services and marketplaces that subscribe to “freedom of speech, individual liberty, and marketplace freedom” as he frames it in the promotional literature. The WhiteHat Movement’s tagline is “Support free speech—shop patriot.” In addition, Nemos has made several attempts to branch out beyond the marketplace and WhiteHat. The Washington Post reports that he “has also sought to create a health insurance company trading on the “Make America Great Again” slogan, as well as an independent cellphone service, according to Alethea Group and GDI, which cited leaked logs from the social media site Discord.”

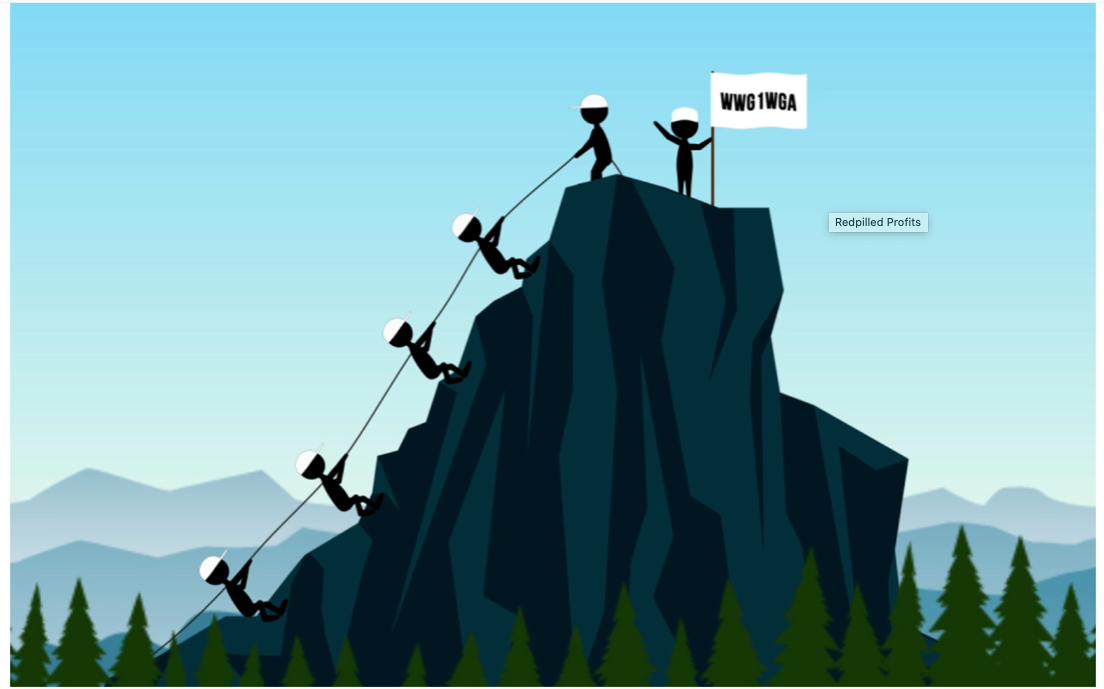

I want to pause on a rudimentary but telling illustration that Nemos uses to accompany an account of Red Pill Living’s profits in promotional literature for the WhiteHat movement.

Fig. 2: Screenshot, “Redpilled Profits” from Dustin Nemos

In the Western movie genre, white Stetsons delineate the admirable and honourable hero; consequently, “white hat” crops up in deep vernacular web spaces to mark out “good guys” or patriots. The trope has also been used to refer to ethical hackers, which is not dissimilar to the way in which Nemos presents himself to prospective collaborators: as an insider who understands the various online factions of the patriot and conspiracy communities and can use this knowledge to good effect. The illustration implies that noble WhiteHat-affiliated companies can help each other succeed by working together (though it might unintentionally connote an arrangement more akin to a pyramid scheme). By citing the QAnon rallying cry “Where we go one, we go all” in this context, Nemos explicitly seeks to connect a statement of solidarity among believers of a conspiracy theory with a business opportunity. He is using the vernacular and logic of QAnon to create alternative economies and shape consumer experiences. Nemos presents his “patriot economy” as playing its part in the great awakening—after all, patterns of production and consumption, and the economy in general, are a part of the consensus reality that has been challenged by QAnon and other conspiracy theories. It follows that a challenge to reality involves changes to commerce.

Echoing ethnonationalist cries heard in conspiracist populism that great swathes of Americans have been left behind economically, Nemos describes his venture as a “Patriot-First marketplace”. This is a clear dog-whistle tactic: Nemos presumably knows that 90 percent of core Trump supporters believe that “discrimination against whites is a major problem in America” (Eatwell and Goodwin 2018). The “patriot” will be prioritised in Nemos’ vision: he or she will be first in line. Just as the alt-right has appropriated so many progressive arguments and tropes, Nemos’ idea of “patriot-first” inverts the redistributive goals of racial justice and even programmes like affirmative action. Nemos wants to construct a trading network that privileges the desires of, and rewards for, right-wing, white Americans (who have commandeered all talk of patriotism).

These marketplace examples and business ventures tell us that the commodification of paranoid styles and conspiracy theories now reaches beyond products (whether goods or media content). Today, conspiracy entrepreneurs attempt to use identifications with conspiracism to develop producer and consumer pathways and loyalties that can be translated into profit in various ways. We know that conspiracist media can change the way people perceive reality, but I want to suggest that it can also guide modes and patterns of production and consumption (as well as “prosumption”).

Crowdfunding: affective patronage and digital tithing

Deplatforming makes it more difficult for conspiracy entrepreneurs to ensure a steady stream of traffic to their marketplaces and eliminates the opportunity to receive payments from the platforms themselves (such as revenue from AdSense on YouTube) or from supporters on hegemonic platforms (using, for example, Facebook’s “creator” or “fundraiser” tools or selling goods to supporters using Facebook’s shopping facilities). Therefore, conspiracy entrepreneurs have come to rely on direct donations from supporters using bespoke fundraising services. Though we might associate it more with charity initiatives, or with entrepreneurs seeking to raise funds from communities rather than venture capitalists, “crowdfunding” is the contemporary term for raising money in this way.

Many creatives and content producers use sites like Patreon to process voluntary contributions, given the difficulties of monetising online content without installing paywalls. While most crowdfunding sites process single payments, Patreon asks donors to commit to a monthly contribution, meaning that it is an ideal solution for those wanting to generate a regular income. It was, therefore, popular among conspiracy entrepreneurs before the platform cracked down on QAnon-related ventures and other varieties of misinformation in October 2020. The appeal of this mode of financing for supporters is that they feel directly involved in the success of their chosen conspiracy content producer. Donors are flattered by the allusion to a venerable history of arts patronage whereby figures of influence and wealth provided security for creatives. However, while crowdfunding sites like Patreon might appear to cut out any third party, creating an affective bond between patron and content creator, the site itself is a third party keeping a percentage of the income: Patreon keeps between five and twelve percent of donations depending on the package.

Crowdfunding can be lucrative. One conspiracy entrepreneur going under the name of Neon Revolt raised $150,000 to publish a QAnon book and raised £115,000 on IndieGoGo for pre-orders in the UK. The conspiracy entrepreneurs I have considered in this article use a variety of methods to solicit regular and direct donations. Icke points users towards the Ickonic monthly or yearly subscription to access premium content. Jones asks supporters to sponsor the InfoWars project as a recurring commitment or one-off payment ranging from $25 to $1000 by using its own credit card payment system. Such schemes eliminate the necessity of a crowdfunding site and allow conspiracy entrepreneurs themselves to retain more of the profits. Because of various bans by payment platforms and Patreon, Nemos News Network has resorted to asking for donations by mail but, as mentioned above, Nemos also has a donation page on Donor Box. Moreover, those wishing to purchase Great Awakening Coffee on Red Pill Living can do so on a monthly subscription basis and pay for this through Visa Inc.-owned Authorize.net using major credit card networks.

Rather than patronage, which suggests a bestowing of a gift upon someone less affluent or powerful, it might be more accurate to think of the crowdfunding of conspiracy entrepreneurs as a form of faith-based tithing. Tithing (a regular offering, traditionally ten percent of earnings, to the Church) features in most Abrahamic religions. It demonstrates commitment to God and adherence to guidance in the Bible. Some conspiracy theories like QAnon borrow from evangelical language and have been likened to a religion or cult. While it is beyond the scope of this blog post to consider that analogy in depth, it gives us a way to understand the support some people offer conspiracy content makers through donations. When a conspiracy theory like QAnon or Icke’s convoluted conspiracy cosmology offers meaning and purpose to those who believe, making financial contributions becomes a self-interested investment rather than an act of charity. Donors are ensuring the continuance of the world view in which they are so heavily invested. They are feeding their faith.

The Myth of the Non-conspiracist Marketplace

Above, we remarked on how the marketplaces belonging to conspiracy entrepreneurs use high production values to rival those of more mainstream marketplaces. However, that would suggest that mainstream e-commerce sites are free of conspiracy content. This is far from accurate. Plenty of products relating to conspiracy theories are available on Amazon and, before belated (and incomplete) action was taken by Amazon and other marketplaces, it also sold a great deal of QAnon merchandise. Third party sellers on Amazon, for example, offered more than 8,000 individual QAnon-branded products in Autumn 2020, according to an analysis conducted by Alethea Group and the Global Disinformation Index.

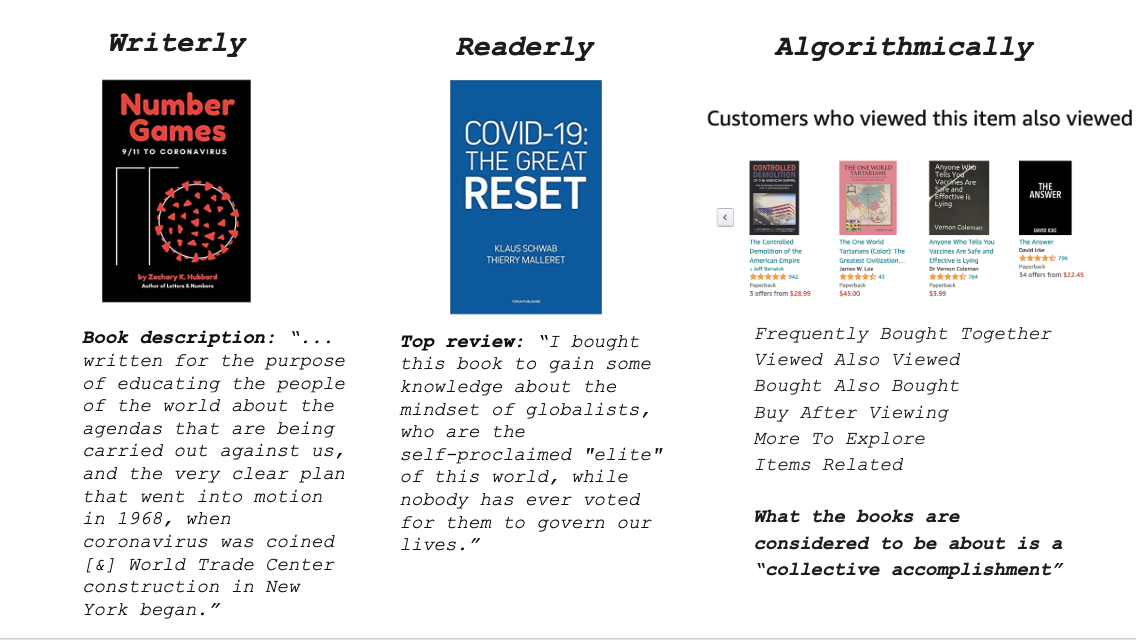

Michael Barkun suggests that conspiracy theories display three main assumptions: first, nothing happens by accident; second, nothing is as it seems; and third, everything is connected (2006, 3-4). If we take this as a guide to demarcating conspiracist from non-conspiracist material, we can see that the two appear side-by-side on mainstream online marketplaces. Indeed, the recommendation algorithm for Amazon ensures that conspiracy books show up alongside non-conspiracist material in ways that create false equivalences between positions, arguments, and texts. A team of researchers on the Digital Methods Winter School at the University of Amsterdam (Veronika Batzdorfer, Liliana Bounegru, Yingying Chen, Tommaso Elli, Zeqing Feng, Alex Gekker, Jonathan Gray, Ekaterina Khryakova, Mingzhao Lin, Matthew Marshall, Thais Lobo, Dylan O’Sullivan, Erinne Paisley, Lara Rittmeier, Nahal Sheikh, Adinda Temminck, Marc Tuters, Fabio Votta, Arwyn Workman-Youmans, Jingyi Wu) in January 2021 usefully distinguish between books that are conspiracist because of how they are written (which they call, after Roland Barthes (1975), “writerly”), books that are connected to conspiracy through the way they are read (“readerly”), and books that are algorithmically associated with conspiracism “through an interplay between recommendation features and user practices.”

Fig. 3: Slide from “Investigating COVID-19 Conspiracies on Amazon”, Digital Methods Winter School

The researchers found that the space for consumer reviews can introduce conspiracist content to the platform even when the product is not ostensibly about conspiracy theory. Reviews for Covid-19: The Great Reset, a book by Executive Chairman of the World Economic Forum, Klaus Schwab, for example, are shot through with a conspiracy theory that finds a sinister plan in “the great reset”. One reviewer on Amazon.co.uk from the 6th of September, 2020, who gave the book one star, writes, “The WEF is an exclusive club and, by its very nature, excludes the majority of the citizens of the world. It’s [sic] real aim is global control of the billions of ordinary people and the destruction of nation states. In other words, the imposition of a totalitarian government. The Great Reset is a sham of epic proportions. Read this book with extreme caution. It is a Trojan horse.” The review appears near the top because it has been voted as “helpful” by 590 people (as of the 3rd of February, 2021). Another from the 8th of October, 2020, claims that “This has all been in the planning for a long time and Covid was deliberately used to force the Reset.” Other reviews mention Agenda 21 or talk about the New World Order, not as they were originally intended (Agenda 21 is the name of a 23-year-old non-binding UN resolution and “the new world order” is a phrase used by politicians throughout the twentieth century at moments when global co-operation was called for) but as they have come to signify within conspiracist circles (Agenda 21 is reimagined by conspiracy theorists as a plot by eco-totalitarians to subjugate humanity and the NWO as a totalitarian one-world government). One review from the 26th of October, 2020, points people towards the discredited disinformation film, Plandemic, offering a link in a manner that ensures the reviews operate in a similar way to social media platforms.
However, these reviews are less ephemeral than social media and leave a conspiracist trace on the marketplace in surprising places. Crucially, the conspiracist reviews attached to readerly conspiracist books remain even while writerly conspiracist books and products are removed.

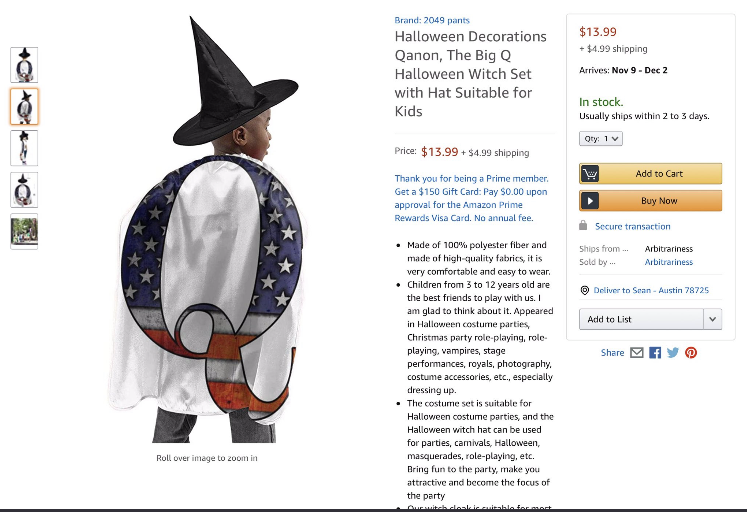

Such marketplaces offer third-party sellers the chance to profit from conspiracy theory without being conspiracy entrepreneurs, having any affinity with conspiracy theorists or believers, or cultivating a following. Apart from books, most of the merchandise for sale on hegemonic marketplaces takes the form of baseball caps, phone cases, or t-shirts emblazoned with QAnon emblems like the letter Q or a rabbit.

Fig. 4: Screenshot of now discontinued auto-generated Halloween costume advertised on Amazon.com

Initially, it seems as though these products are symptomatic of the shedding of explanation and political theory that Russell Muirhead and Nancy L. Rosenblum diagnose (2019, 19). However, these symbols must be understood as nodes in a distributed network of conspiracy theories and paranoid styles. While this merchandise may not itself display the qualities of what Muirhead and Rosenblum name “classic conspiracism” (29), which for them is exactly Hofstadter’s paranoid style, it gestures towards the larger QAnon movement and its reams of “research”, which very much illustrate a belief that conspiracy is “the motive force in history” (Hofstadter 1964, 29). The theory might be “elsewhere”, that is, but this merchandise appeals and speaks to conspiracy-literate consumers who know where to find it.

One way in which this merchandise significantly diverges from Hofstadter’s paranoid style, however, is that the proponents, here the merchants themselves, are far from the passionate, invested spokespeople imagined by Hofstadter. Conspiracy commerce within hegemonic online marketplaces relies on a level of mechanical or algorithmic reproduction that suggests a radical distance between merchant and merchandise, between producer and consumer, and between signifier and style. Rather than a conspiracy entrepreneur, what we have here is a conspiracy bot. In certain cases, the bot, the merchant, and therefore the platform, are radically disinterested in what the product communicates as long as the product sells. This is the case until platforms are made to care via pressure from interest groups. James Bridle writes about algorithmically-generated content and products. Automation has led to disturbing examples of t-shirts and other apparel with offensive slogans. Bridle describes a t-shirt on Amazon that reads “Keep Calm and Rape A Lot”. He writes, “Nobody set out to create these shirts: they just paired an unchecked list of verbs and pronouns with an online image generator. It’s quite possible that none of these shirts ever physically existed, were ever purchased or worn, and thus that no harm was done.” However, the point is that “the scale and logic of the system is complicit in these outputs”. These slogans are not glitches, but necessary possibilities of automation. Looking at YouTube and its hosting of unsettling algorithmically-generated content for children, Bridle calls this form of content agnosticism “infrastructural violence”.

The content agnosticism and the logic of infrastructural violence evident in this algorithmic generation of Q content are key elements of the commodification of conspiracy theory and the proliferation of distributed and networked paranoid styles today. It may be true that crowd-sourced conspiracy theories like QAnon represent a collapse in the traditional distance between producers and consumers, but conspiracy commerce on mainstream marketplaces presents us with a stark gap between conspiracy theorists (many of whom are deeply invested in the alternative cosmologies offered by the theories they engage with) and the merchants that seek to capitalise on that engagement. There is perhaps an even deeper chasm between conspiracy consumers and the platforms whose business models depend on attention and engagement regardless of content (beyond what that content can contribute to profitable audience profiling).

The House Always Wins

During the last few years, and with more urgency since the Covid-19 pandemic and the storming of the US Capitol in January 2021, mainstream social media platforms and marketplaces have responded to political and public pressure by deplatforming and de-amplifying certain conspiracist content. Lest we consider this a purely altruistic act of corporate social responsibility, such measures are as much a cost-based strategy as previous adherence to content agnosticism. The platforms presumably decided that the negative publicity from complacency towards disinformation with connections to violent or deadly outcomes would be more damaging than the loss in revenue from deplatformed conspiracy content, traffic, and merchandise. While content agnosticism is crucial in a platform’s early days of consolidation, platforms can afford to be more discerning once users are locked in through social ties. This is why newer platforms like Gab welcomed QAnon when more established platforms were taking action against it.

In general, user attention, engagement, and traffic are valuable to online platforms regardless of what is holding that attention or generating engagement because those platforms are reliant on data extraction, using “tracking infrastructures and practices that underpin audience commodification” (Bounegru, forthcoming). Crucially, data about users have, according to scholars like Shoshana Zuboff (2019), been fed back into the system to not only serve targeted adverts, but also predict and modify human behavior. This means that platforms can encourage the continued engagement and attention of users to generate ever more user data in an optimised feedback loop. Under “surveillance capitalism” (Zuboff 2019), conspiracy content and paranoid styles are commodified by infrastructure that is only concerned with content and style in so far as they generate a user profile that can then become part of a dataset sold to data brokers or used for in-house advertising. To illustrate the depths of this content agnostic approach, we only need to consider the case of Facebook including the category “anti-Semite” for potential advertisers to target until it was brought to their attention. Facebook’s defence rested on the fact that the category had been generated by an algorithm—as though the role of AI absolves the platform that utilises it of responsibility. An algorithm that recognises any marketing category no matter how problematic is a design choice. The paranoid style in the age of datafication is broken down into a series of zeros and ones, ready to be monetised not this time by conspiracy entrepreneurs, but surveillance capitalist platforms.

Social media platforms, search engines, data brokers, and any other entities whose business models rely on the efficacy of data extraction infrastructure stand to gain the most in financial terms from the proliferation of online paranoid styles in the datafied era. The Center for Countering Digital Hate estimates that, for example, David Icke’s following alone could be worth up to $23.8 million in annual revenue for tech platforms (a figure which reflects both income generated by advertisers to Icke’s followers and the money Icke spends on advertising). Moreover, researchers have shown that the novelty of false rumours (of which conspiracy theories are a subcategory) ensures that they travel faster, farther and deeper than the truth on Twitter (Vosoughi, Roy & Aral 2018). This speed and reach mean that disinformation generates a great deal of monetisable attention and engagement for platforms.

Being content agnostic in terms of advertising is also profitable. A report on Buzzfeed shows how Facebook profits from disinformation adverts. For example, it banked “almost $10 million in advertising revenue from the Epoch Times, a pro-Trump media organization that spreads conspiracies, before banning the outlet’s ads for using fake accounts and other deceptive tactics.” The report collates previous Buzzfeed investigations to remind its readers that in 2020, Facebook “took money for ads promoting extremist-led civil war in the US, fake coronavirus medication, anti-vaccine messages, and a page that preached the racist idea of a genocide against white people, to name a few examples.” Even when checks are put in place, they are often conducted by, as Cory Doctorow points out, “low-paid, unempowered contractors.” Far from anomalies, adverts for disinformation and scams are endemic [on Facebook], arising from “a deliberately constructed system designed to maximize profits from [such] ads.”

Equally, while we have outlined the problems faced by conspiracy entrepreneurs when they are banned from crowdfunding platforms, the latter benefit from an ad-hoc and inconsistent approach to content moderation and deplatforming. According to a report by Disinfo.eu, crowdfunding platforms rely heavily on user reporting to moderate content. On Patreon, for example, conspiracy entrepreneurs can publish private posts to their financial supporters who are less likely to report content that violates community guidelines. “This effectively creates a loophole whereby users can spread and finance disinformation without moderation.”

To give another example of platforms profiteering from disinformation, Jonathan Gray, Liliana Bounegru, and Tommaso Venturini find that “US and Western European advertisers, marketers and technology companies that dominate online audience markets as well as associated tracking infrastructures . . . participate in the monetisation of junk and mainstream news alike” (2020, 333). Indeed, trackers operate indiscriminately across the (dis)information ecosystem. While scandals regarding fake news (the focus of their article) and other forms of disinformation have “prompted numerous remedial projects, policy consultations, startups, platform features and algorithmic innovations . . . there is also a case for – to paraphrase [Donna Haraway] – slowing down and dwelling with the infrastructural trouble” (334). Only by taking this time will we be able to begin to imagine how to “re-align infrastructures with different societal interests, visions and values” (334). In the case of conspiracy content and paranoid styles, this might mean countering content-agnostic design from the beginning rather than after data harms have been committed, but also reimagining the relationships between users, data, infrastructure, and content.

We know, therefore, that all kinds of digital platforms, data brokers and ad-tech infrastructure profit from disinformation like conspiracy theories. But there is an irony in operation here, for the story of surveillance capitalism put forth by Zuboff holds certain similarities to the paranoid style. The subtitle of her book is The Fight for a Human Future at the New Frontier of Power and she locates the exact nature of the exploitation as “the rendering of our lives as behavioral data for the sake of others’ improved control of us” (2019, 94). Zuboff names and shames the enemy: “The world is vanquished now, on its knees, and brought to you by Google” (2019, 142). Ultimately, Zuboff warns readers of the unprecedented asymmetric power wielded by data capitalists with the ominous repeated refrain: “Who knows? Who decides? Who decides who decides? [italics in original]” (521), a phrase which echoes the graffiti derived from Juvenal, “Who watches the watchmen?”, in Alan Moore and Dave Gibbons’s graphic novel of conspiracy and paranoia, Watchmen (1987). What is at stake, Zuboff writes, “is the human expectation of sovereignty over one’s own life and authorship of one’s own experience” (522). She warns “those who would try to conquer human nature” that they should expect to “find their intended victims full of voice, ready to name danger and defeat it” (525).

Some might regard such rhetoric as “overheated, oversuspicious, overaggressive, grandiose, apocalyptic” and characterised by righteousness and moral indignation (Hofstadter 1964, 4). Her central image is certainly “that of a vast and sinister conspiracy, a gigantic and yet subtle machinery of influence set in motion to undermine and destroy a way of life” (29). Crucially, however, we cannot accuse Zuboff of succumbing to the tell-tale “curious leap of imagination” (37), nor of believing that conspiracy is the motivating force in history (for Zuboff, a more likely candidate would be technology). While individual actors like Eric Schmidt or Mark Zuckerberg certainly make decisions and come to represent a shift towards the worst exploitations of data extraction, Zuboff’s analysis points towards a structural condition or economic paradigm that is enabled by digital affordances and data markets. We point out the close rather than distant relationship between conspiracy theory and more legitimate ways of thinking and interpreting because it helps us to understand how difficult it has become to say anything in the public realm that cannot be presented or dismissed as a conspiracy theory; but also because legitimate and “illegitimate” ways of knowing stem from the same irreducible crisis of authority and are therefore not distinct opposites (see Birchall 2006). The need for people to recognise surveillance capitalism as an urgent and legitimate problem is what makes Zuboff’s rhetoric so impassioned and emphatic in the first place, but when her rhetoric veers into the territory of the paranoid style of a conspiracy theory, we face the possibility that all knowledge is only ever theory, only style. The danger of such alarmist writing is that it misses the precise ways in which we live alongside and inside and are subjectivized within “data worlds” (Gray 2018).
It obscures “how data infrastructures may be involved in not just the representation but also the articulation of collective life” (Gray 2018). Quinn Slobodian asks, “Isn’t the precise characteristic—the secret even—of this mode of accumulation that we are not actually dispossessed or extracted, but that we get to keep our own feelings even as Google gets them too?” If there is some kind of generalised conspiracy (built into operating systems and infrastructure) against users to commodify attention, that attention can also forge meaningful connections and groupings. This would have to include those conspiracist communities, or counterpublics, that coalesce around the most cynical (or even the most algorithmically governed) conspiracist marketplaces and social media spaces.

There are policy recommendations and infrastructural fixes that could interrupt the commodification of online conspiracism should we think it pertinent. We have seen that deplatforming conspiracy entrepreneurs from the main social media platforms has certainly made it more difficult for them to make money. Avaaz promotes demonetizing disinformation by demoting and decelerating disinformation actors. It argues that this method does not impinge on free speech; it simply disincentivizes users from promoting misleading content. The Global Disinformation Index encourages brands to put pressure on the ad-tech industry, particularly ad exchanges, to not allow their adverts to appear on domains that contain disinformation. Facebook, Google and Twitter agreed a joint statement with the government in the UK that “no user or company should directly profit from Covid-19 vaccine mis/disinformation”, although a report by the Bureau of Investigative Journalism found violations of this agreement on a mass scale. This demonstrates the limitations of voluntary agreements.

Deplatforming conspiracy entrepreneurs from hegemonic social media and online marketplaces might be good publicity for those platforms. But it is the “passive ecosystem” that requires most attention. This includes “the mechanisms that allow this content to be hosted and spread, and sometimes to hide ownership, such as DNS infrastructure, adtech, and algorithmic recommendation.” It is infrastructural violence that needs to be addressed. As Gray, Bounegru, and Venturini (2020) point out, it is not just a case of optimizing infrastructure (or, we would add, of deplatforming individuals) but of reassessing the political economy that lies behind and shapes infrastructural choices. At the social level, it is also about rethinking the role that experts, knowledge and trust should play in the information ecology and whether there are other ways to meet the concerns, fears, and needs that are currently being met through consuming conspiracy.

Conspiracy Capitalism?

It is tempting to call the different commercial techniques, relations, and exchanges we have written about in this article “conspiracy capitalism.” However, capitalism is expansionist by definition, reliant upon creating new “needs” and finding new markets. Paranoid styles and conspiracy theories operate at affective and cognitive levels, accelerating agitations that require relief, beliefs that seek corroboration, and grievances that crave reparation or validation. While there is ethnographic work to be done on the precise ways in which people consume (and prosume) conspiracist content, we can recognise that commodified media content, personalities, and goods play their parts in those processes. If there is little new here for capitalism, which has always exploited fears and created needs, it is important that we still recognise how the conspiracy market has developed. Doing so can tell us much about the role of curated personality and influence in online commerce and the (dis)information ecology as well as the priorities and protocols of tech platforms.

References

Barthes, Roland. 1975. S/Z. Translated by Richard Miller. London and New York: MacMillan.

Bergmann, Eirikur. 2018. Conspiracy and Populism: The Politics of Misinformation. London: Palgrave Macmillan.

Birchall, Clare. 2006. Knowledge Goes Pop: From Conspiracy Theory to Gossip. Oxford: Berg.

Bounegru, Liliana. Forthcoming. Digital Methods for News and Journalism Research. London: Polity.

Eatwell, Roger and Matthew Goodwin. 2018. National Populism: The Revolt Against Liberal Democracy. London: Penguin.

Gray, Jonathan. 2018. “Three Aspects of Data Worlds.” Krisis 1. https://archive.krisis.eu/three-aspects-of-data-worlds/

Gray, Jonathan, Liliana Bounegru and Tommaso Venturini. 2020. “‘Fake news’ as Infrastructural Uncanny.” New Media and Society 22(2): 317–341. https://doi.org/10.1177/1461444819856912

Hofstadter, Richard. 1964. The Paranoid Style in American Politics and Other Essays. Cambridge, Mass.: Harvard University Press.

Knight, Peter. 2000. Conspiracy Culture: From Kennedy to The X-Files. London: Routledge.

Moore, Alan and Dave Gibbons. 1987. Watchmen. New York: DC Comics.

Muirhead, Russell and Nancy L. Rosenblum. 2019. A Lot of People Are Saying: The New Conspiracism and the Assault on Democracy. Princeton, NJ: Princeton University Press.

Nagle, Angela. 2017. Kill All Normies: Online Culture Wars from 4Chan and Tumblr to Trump and the Alt-Right. Winchester: Zero Books.

Ritzer, George and Nathan Jurgenson. 2010. “Production, consumption, prosumption: The nature of capitalism in the age of the digital ‘prosumer’.” Journal of Consumer Culture 10(1): 13–36.

Rogers, Richard. 2020. “Deplatforming: Following Extreme Internet Celebrities to Telegram and Alternative Social Media.” European Journal of Communication 35(3): 213-229.

Vosoughi, Soroush, Deb Roy, and Sinan Aral. 2018. “The Spread of True and False News Online.” Science. 9 March: 1146–1151.

Ward, Charlotte and David Voas. 2011. “The Emergence of Conspirituality.” Journal of Contemporary Religion, 26(1): 103–21.

Zuboff, Shoshana. 2019. The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power. London: Profile.

This post is published under the terms of the Attribution-NonCommercial-NoDerivatives 4.0 International (CC BY-NC-ND 4.0) licence.